Testing option for OEM labs, vendors, and the tech press. Wanted it to be a free, easily accessible, easy-to-run, useful, and appealing Our goal was to offer a benchmark that could compare the performance of almostĪny web-enabled device, using scenarios created to mirror real-world tasks. Space that was already crowded with a variety of specialized measurement tools. Topics that you’d like us to cover in possible future white papers, please let us know!Īnnounce that it’s been 10 years since the initial launch of WebXPRT! In earlyĢ013, we introduced WebXPRT as a unique browser performance benchmark in a market If you have questions about any of our white papers, or suggestions for Hope that the XPRT white paper library will prove to be a useful resource for

Reproduces the exact calculations WebXPRT uses to produce test scores.

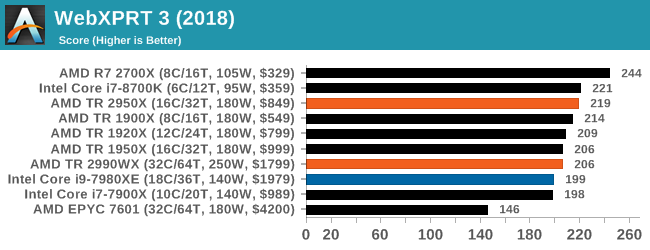

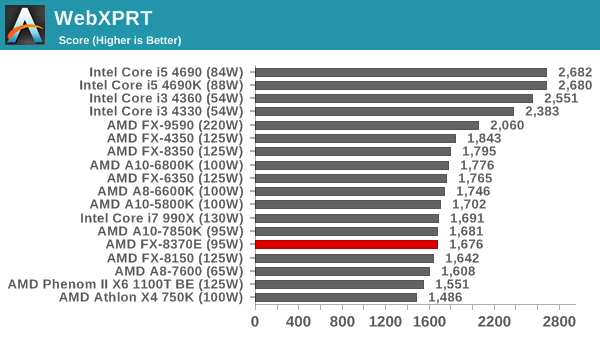

The white paper’s discussion of the results calculation process, we publishedĪ results calculation spreadsheet that shows the raw data from a sample test run and Interval and how it differs from typical benchmark variability. Translate raw timings into scores, and explains the benchmark’s confidence Score, provides an overview of the statistical techniques WebXPRT uses to WebXPRT 4 uses to calculate the individual workload scenario scores and overall Tests, and how to submit results for publication.Ĭompanion WebXPRT 4 results calculation white paper explains the formulas that Includes explanations of the benchmark’s scoring methodology, how to automate Information about the benchmark’s harness, HTML5 and WebAssembly (WASM)Ĭapability checks, and the structure of the performance test workloads. Paper describes the design and structure of WebXPRT 4, including detailed Library is a great place to find some answers. Questions about any aspect of one of the XPRT benchmarks, the white paper Make certain development decisions, and how the benchmarks work. XPRT users with more information about how we design our benchmarks, why we We started publishing white papers to provide XPRT white paper library currently contains 21 white papers that we’ve Today, we’re focusing on the XPRT white paper library. In the technology assessment industry, it’s not unusual for people to claim that any given benchmark contains hidden biases, so we take preemptive steps to address this issue by publishing XPRT benchmark source code, detailed system disclosures and test methodologies, and in-depth white papers. What are your thoughts on browser testing? We’d love to hear from you.As part of our commitment to publishing reliable, unbiased benchmarks, we strive to make the XPRT development process as transparent as possible. In today’s crowded tech marketplace, that piece of information provides a great deal of value to many people. 
Simply put, a device with a higher WebXPRT score is probably going to feel faster to you during daily use than one with a lower score. While lab techs, manufacturers, and tech journalists can all glean detailed data from WebXPRT, the test’s real-world focus means that the overall score is relevant to the average consumer. For example, when Eric discussed a similar topic in the past, he said the tests in JetStream 1.1 provided information that “can be very useful for engineers and developers, but may not be as meaningful to the typical user.”Īs we do with all the XPRTs, we designed WebXPRT to test how devices handle the types of real-world tasks consumers perform every day. Some scores help you to understand the performance you can expect from a device in your everyday life, and others measure performance in scenarios that you’re unlikely to encounter. Some scores reflect a very broad set of metrics, while others assess a very narrow set of tasks. These approaches are all valid, and it’s important to understand exactly what a given score represents. Some focus on very low-level JavaScript tasks, some test additional technologies such as HTML5, and some are designed to identify strengths or weakness by stressing devices in unusual ways. The reason that the benchmarks rank the browsers so differently is that each one has a unique emphasis and tests a specific set of workloads and technologies. Edge came out on top in JetStream and SunSpider, Opera won in Octane and WebXPRT, and Chrome had the best results in Speedometer and PCWorld’s custom workloads. Browser speed sounds like a straightforward metric, but the reality is complex.įor the comparison, PCWorld used three JavaScript-centric test suites (JetStream, SunSpider, and Octane), one benchmark that simulates user actions (Speedometer), a few in-house tests of their own design, and one benchmark that simulates real-world web applications ( WebXPRT). 
As we’ve noted about similar comparisons, no single browser was the fastest in every test. PCWorld recently published the results of a head-to-head browser performance comparison between Google Chrome, Microsoft Edge, Mozilla Firefox, and Opera.
